The data center industry is going through a moment similar to the one renewable energy went through two decades ago: an accelerating technological wave, a need for significant capital, and a regulatory framework that has to keep pace with investment. In this context, Monsson is betting on an integrated model of renewable energy, battery energy storage systems (BESS), and co-located data centers to put Romania on the European map of critical infrastructure for AI and cloud.

In this interview, Sebastian Enache, Head of M&A at Monsson, explains the lessons learned from the development of the renewables market, the role of storage in supporting large energy consumers, and the company's ambition to develop a scalable platform of data centers of up to 200 MW in several strategic regions of the country.

As a side note, Sebastian Enache will also be on stage at DataCenter Forum 2026, on a panel that will outline the similarities and synergies between the energy sector and the data center sector.

DataCenter Forum: In renewable energy, Romania has gone through stages of maturation and standardization. Which lessons from the development of the renewable energy market do you think could be applied to the local data center industry, especially regarding permitting and grid integration?

Sebastian Enache, Head of M&A Monsson: In renewable energy, Romania began building a strategic direction as early as 1999, and in 2003 the first wind turbines that counted as high-capacity for that time were installed. Although photovoltaic parks were also being discussed, solar technology was not yet efficient or competitive enough, so priority went to wind, the most efficient solution in that context. After almost 25 years, we see that both technologies have evolved significantly and are standardized, bankable, and almost equally widespread.

The main lesson is that every technological wave has critical, strategic moments in which the regulatory framework, administrative capacity, and investors' courage make the difference between stagnation and acceleration. In the early 2000s, everyone was talking about renewables, but few had real experience, legislation was incomplete, and permitting processes were unclear. Only a few bold investors chose to enter a field that was still immature, taking on significant risks.

Today I see a clear parallel with the data center industry. In renewables we are talking about large equipment with a lifespan of 20 to 40 years that has to produce energy stably and predictably. In data centers we are talking about critical infrastructure that is just as complex and designed for the long term, but that hosts technologies which change every 2 to 3 years. Servers can be replaced relatively quickly, but the energy, cooling, and connectivity infrastructure remains a long-term investment and requires legislative and operational predictability.

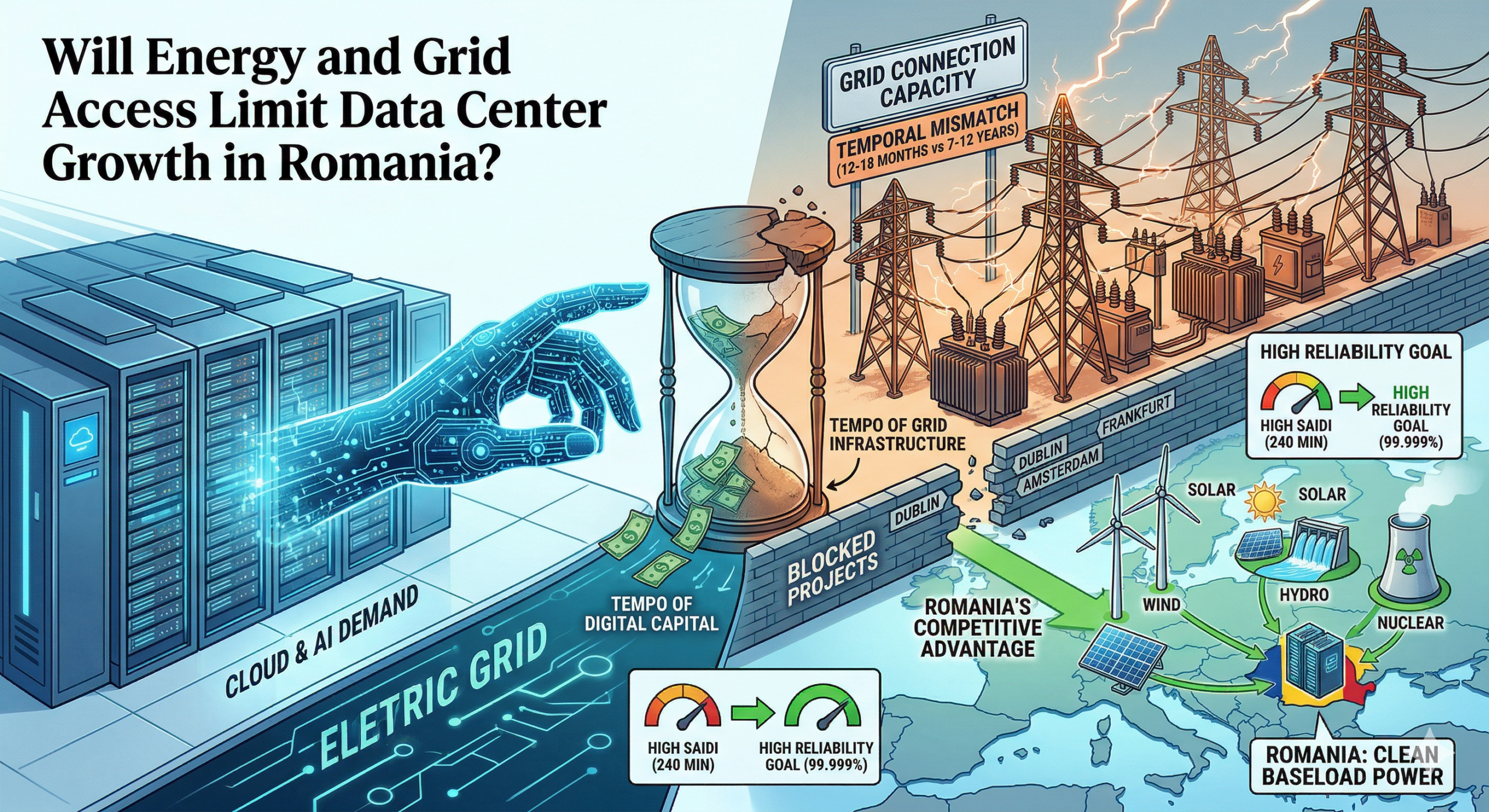

From the experience of renewables, an essential lesson for data centers is the importance of a clear, coherent, and digitalized permitting process, as well as a transparent grid integration strategy. A lack of coordination between investors, grid operators, and authorities can delay critical projects for years. By contrast, planning grid capacity in advance and defining priority development zones can accelerate investment and reduce risk.

I believe there is a natural convergence between the two industries. Data center professionals need to work actively with the authorities, not only to highlight the importance of these investments but also to help define a legislative framework adapted to technological realities. Rapid construction, efficient grid connection, and siting in suitable areas with robust energy infrastructure are critical if Romania is to capitalize on this new wave of development, as it eventually did in renewable energy.

DCF: There is great interest at Data Center Forum in sustainable investments. How do you see the role of energy storage (BESS) and renewable sources in supporting the operations of energy-intensive data centers, for example for AI or cloud, in Romania and Europe?

Sebastian Enache: Interest in sustainable investment in data centers is natural, especially given the accelerating growth in consumption driven by AI and cloud. From my perspective, battery energy storage (BESS) and renewable sources will play an essential role in supporting these operations in Romania and in Europe.

BESS technology is relatively new at large scale, but over the last 5 years it has developed spectacularly, both technologically and economically. Whereas in the past integrating a storage system was not financially feasible for most projects, today almost all modern wind and photovoltaic parks include BESS, and in parallel we are seeing the development of stand-alone systems dedicated to ancillary services. BESS is no longer just an accessory, but an element that gives the grid flexibility, stability, and predictability.

In this context, the parallel with data centers is clear. Until recently, BESS was not seen as an essential component of a data center, where the emphasis was on classic redundancy: diesel generators and UPS units. However, as consumption grows and energy price volatility becomes a reality, storage systems will, in my opinion, become a standard component. Why? Because they allow costs to be optimized by buying energy at low prices during periods of surplus and using it during peak intervals. At the same time, they provide an additional source of redundancy and can support the direct integration of local renewable production.

Moreover, BESS makes it easier to connect a data center efficiently to a green energy mix, reducing the impact on the grid and increasing operational stability. For AI data centers, where power demand is high and constant, the combination of renewables, storage, and intelligent energy management can make the difference between a bankable project and one that is vulnerable to price and availability risks.

Monsson's vision is clear: a data center is better positioned next to a renewable energy hub integrated with storage capacity than as an isolated consumer on the grid. By matching the initial investment in generation and storage to the data center's consumption profile, the overall return of the project can increase significantly; our estimates indicate a potential of over 25%. In this way, sustainability is not just an image objective but becomes a real competitive advantage.

DCF: Monsson is known mainly for its renewable energy and storage projects. What led you to expand into data center infrastructure, and what synergies do you see between these two industries? (I am thinking of an integrated model of energy + batteries + data centers.)

Sebastian Enache: I know, Monsson is known mainly for developing renewable energy and storage projects, but expanding into data center infrastructure is a natural evolution of our vision. As I mentioned earlier, our goal is to attract as much relevant energy consumption as possible close to our production hubs. We believe the future belongs to the integrated model: energy + batteries + data centers.

In essence, the secret lies in bringing consumption next to production. In the classic model, energy is produced in one area, transported over long distances, and consumed elsewhere, which entails losses, high connection costs, and additional pressure on the grid. By positioning data centers near wind and photovoltaic parks integrated with BESS, we can optimize the entire investment chain. We reduce transmission infrastructure costs, shorten implementation time, and create a much more efficient energy ecosystem.

For the data center developer, the advantage is strategic: access to energy that is competitive, predictable, and, increasingly important, green. For us, as a developer of renewable and storage capacity, integrating a large, constant consumer such as a data center increases the project's financial stability and improves the return on investment. In practice, we are talking about an additional optimization of the investment for all parties involved.

The synergies are also evident from a technical perspective. Batteries make it possible to balance renewable production and adapt it to the data center's consumption profile. At the same time, the data center provides relatively stable consumption, which helps monetize the energy projects. In such a model, the national grid becomes a partner, not the only solution, and pressure on public infrastructure is reduced.

We see this integrated model as a competitive advantage for Romania. With Europe looking for locations to develop AI and cloud capacity, the availability of green energy at scale is an essential criterion. If we can offer investors an integrated package (land, renewable energy, storage, and fast connection solutions), then we can turn energy hubs into digital hubs.

For Monsson, this expansion does not mean leaving our area of expertise, but putting it to use in a more complex and more efficient way. We believe the future of critical infrastructure is integrated, and the combination of energy, batteries, and data centers is a logical step in that direction.

DCF: Developing a 50 MW pilot data center in Romania is an ambitious undertaking. What are the main similarities and differences between the investment dynamics in renewable energy and in data centers, in terms of regulation, financing, and scalability?

Sebastian Enache: Developing a 50 MW pilot data center is indeed an ambitious step, but from our perspective it rests on a solid foundation. We already have the 50 MW of grid connection capacity through our hybrid project at Mireasa, where we operate 50 MW of wind, 35 MW of photovoltaic, and approximately 200 MWh of batteries. This combination gives us ideal conditions to support a high-consumption data center, securely and over the long term.

In terms of similarities, both renewables investments and data center investments are capital-intensive, depend on grid access, and require legislative and contractual predictability to be financeable. In both cases, investors look for a stable regulatory framework, visibility on revenues, and a clear development horizon.

The differences lie in the technological dynamics and the regulatory framework. Renewable energy today benefits from a mature legislative framework, whereas for data centers there is not yet any specific legislation or clearly defined zones where development is prioritized. That is why we want to work actively with the authorities to help create a predictable and competitive framework.

We are getting involved in this area to support the development of the local data center industry and, just as we were pioneers in large renewable energy projects, we want to be among the first to build an integrated model in this industry as well.

DCF: Is the 50 MW pilot project just the beginning, or is it a replicable model? What plans do you have to turn this initiative into a broader expansion platform in the region?

Sebastian Enache: The 50 MW pilot project is not an isolated undertaking but the start of a replicable model that we want to extend nationally and regionally. Our strategy targets the development of data centers with capacities between 50 MW and 200 MW in 7 strategic areas of Romania, including Constanța, Satu Mare, and Arad. The choice of these locations is based on access to solid energy infrastructure as well as on geographic positioning and connectivity to transport and fiber-optic networks.

Our goal is to build an integrated platform in which renewable energy, battery storage, and large-scale consumption, represented by data centers, operate in an optimized ecosystem. The model developed at 50 MW can be scaled naturally to 100 or 200 MW using the same logic: local energy production, storage capacity, and infrastructure prepared for phased expansion.

We want to capitalize on Romania's strategic geopolitical position. Although most data centers are currently concentrated in Western Europe, we see clear signals that Central and Eastern Europe, including Romania, can become a relevant hub for AI, cloud, and digital infrastructure. Through this platform, we aim to position Romania as a central point on Europe's new digital map.

DCF: What are the biggest challenges you anticipate in integrating data centers with existing renewable energy and storage networks, from a technical, operational, or regulatory standpoint?

Sebastian Enache: Challenges exist and always will when we talk about critical infrastructure at scale. Integrating data centers with existing renewable energy and storage networks brings both opportunities and technical, operational, and regulatory challenges.

From a technical standpoint, the main challenge is grid capacity and managing energy flows in a stable and predictable way. Data centers, especially those dedicated to AI or cloud, have high and constant consumption, and the grid must be able to support these loads without creating imbalances. However, a major advantage is that existing renewable projects are already grid-connected and, in many cases, include BESS. This creates the conditions for co-locating data centers next to generation and storage capacity, reducing pressure on public infrastructure and offering a more efficient solution than in many other countries.

Operationally, the challenge is to integrate intermittent renewable production intelligently with the data center's consumption profile. This is where batteries and advanced energy management systems come in, as they can provide flexibility, redundancy, and cost optimization.

From a regulatory perspective, permitting remains a critical point. At present, there is no dedicated framework that treats the development of data centers near energy hubs in an integrated way. Strategic projects need clarity, predictability, and accelerated procedures.

For our part, we bring part of the solution: grid connection capacity that is already available, mature technology, committed investment, and land ready for development. The rest depends on collaboration with the authorities and on investors' appetite to seize this opportunity.


Liquid cooling is becoming the standard in AI data centers, with adoption rising from 14% in 2024 to 33% in 2025, and it is expected to reach 40% in 2026, according to Trendforce cited by Accenture. In the near future, data centers will use a combination of air-based and liquid-based cooling, while water-intensive evaporative methods will be phased out due to sustainability concerns and water scarcity. Sustainability has become a central principle in data center design, influencing everything from prioritizing low-carbon materials and modular construction to water management, heat reuse, and integration of renewable energy.
